
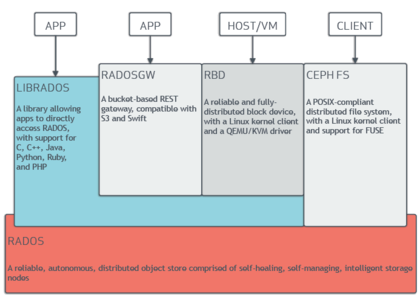

CEPH is a free and open-source object storage system. This article describes the basic terminology, installation and configuration parameters required to build your own CEPH environment.

CEPH has been designed to be a distributed storage system that is highly fault-tolerant, scalable and configurable. It can run on a large number of distributed commodity machines, eliminating the need for very large central storage solutions.

This post aims to be a basic, short and self-contained article that explains the key details you need to understand and experiment with CEPH.

Environment

I've set up CEPH on my laptop with a few LXC containers. My setup has –

- Host: Ubuntu 16.04 xenial 64-bit OS

- LXC version: 2.0.6

- 40G disk partition that is free to use for this experiment.

- CEPH Release – Jewel

The Ubuntu site has documentation on LXC configuration here, but it covers unprivileged containers, whereas we'll need 'privileged' containers. Privileged containers aren't considered secure, since root inside the container is mapped to the root user on the host. Hence I've used them only for experimenting.

See the documentation on the LXC site here to understand how to create privileged LXC containers.

Now, create these containers (as privileged) with names like these –

- cephadmin

- cephmon

- cephosd0

- cephosd1

- cephosd2

- cephradosgw

Here's a command to create an Ubuntu Xenial 64-bit container. You could choose another distro, in which case some of the instructions might not apply. Using the names above, run the command to create each container –
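The command itself is missing from this copy of the post. As a sketch, assuming LXC's standard `download` template (its `-d`/`-r`/`-a` options select distribution, release and architecture) and root access via `sudo`, which is what makes the containers privileged, the per-container command lines can be generated like this. The script only prints them so you can review them first.

```python
import shlex

# Container names used throughout this post.
CONTAINERS = ["cephadmin", "cephmon", "cephosd0", "cephosd1", "cephosd2", "cephradosgw"]


def lxc_create_cmd(name: str) -> list[str]:
    """Build the lxc-create command for one Ubuntu Xenial 64-bit container.

    Running lxc-create through sudo (as root) is what makes the container
    privileged; everything after "--" is passed to the download template.
    """
    return ["sudo", "lxc-create", "-t", "download", "-n", name,
            "--", "-d", "ubuntu", "-r", "xenial", "-a", "amd64"]


if __name__ == "__main__":
    for container in CONTAINERS:
        # Print shell-ready lines; nothing is executed here.
        print(shlex.join(lxc_create_cmd(container)))
```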

Although CEPH installation guides talk about separate public and private networks, for testing purposes the 'lxcbr0' bridge provided by LXC is sufficient to work with CEPH successfully.

Start the containers and install SSH servers on all of them –
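As a sketch of this step, assuming LXC 2.0's `lxc-start` and `lxc-attach` and the `openssh-server` package from the Xenial image, the commands for a single container look like this. The helper name is illustrative, not part of any tool.

```python
import shlex


def start_and_ssh_cmds(name: str) -> list[list[str]]:
    """Commands that start one container and install an SSH server inside it."""
    attach = ["sudo", "lxc-attach", "-n", name, "--"]  # run a command in the container as root
    return [
        ["sudo", "lxc-start", "-n", name, "-d"],  # -d: run the container in the background
        attach + ["apt-get", "update"],
        attach + ["apt-get", "install", "-y", "openssh-server"],
    ]


if __name__ == "__main__":
    for argv in start_and_ssh_cmds("cephmon"):
        print(shlex.join(argv))
```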

Repeat the above commands for all the containers you've created, then restart all of them. Register the container names in your /etc/hosts so that you can easily log in to them.
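A small helper for the /etc/hosts step, assuming you've read each container's IPv4 address from `sudo lxc-ls -f`. The addresses in the example are hypothetical (on Ubuntu, LXC's default `lxcbr0` subnet is 10.0.3.0/24).

```python
import ipaddress


def hosts_lines(addresses: dict[str, str]) -> list[str]:
    """Format tab-separated '<ip> <name>' lines for /etc/hosts, sorted by name."""
    lines = []
    for name, addr in sorted(addresses.items()):
        ip = ipaddress.ip_address(addr)  # raises ValueError for a malformed address
        lines.append(f"{ip}\t{name}")
    return lines


if __name__ == "__main__":
    # Hypothetical addresses; take the real ones from `sudo lxc-ls -f`.
    example = {"cephmon": "10.0.3.11", "cephadmin": "10.0.3.10"}
    print("\n".join(hosts_lines(example)))
```

Append the printed lines to /etc/hosts on the host (and on cephadmin, so ceph-deploy can resolve the other containers by name).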

Disk Setup

On the 40G disk partition you've allocated for this exercise, perform the following –

- Delete the existing partition

- Create 3 new partitions

- Format each of them with the 'XFS' filesystem.
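Partitioning is usually done interactively (for example with fdisk), so this sketch covers only the formatting step. It assumes the partitions came out as /dev/sda8, /dev/sda9 and /dev/sda10, the example names used later in this post.

```python
import shlex

# Assumed partition names; use whatever your partitioning step produced.
PARTITIONS = ["/dev/sda8", "/dev/sda9", "/dev/sda10"]


def mkfs_xfs_cmd(device: str) -> list[str]:
    """Build the command that formats one partition with XFS.

    -f makes mkfs.xfs overwrite any existing filesystem signature, which
    destroys the data on that partition: double-check the device first.
    """
    if not device.startswith("/dev/"):
        raise ValueError(f"not a device path: {device!r}")
    return ["sudo", "mkfs.xfs", "-f", device]


if __name__ == "__main__":
    for part in PARTITIONS:
        print(shlex.join(mkfs_xfs_cmd(part)))
```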

CEPH recommends using XFS, BTRFS or EXT4. I've used XFS in my tests. I tried EXT4 but received warnings about its limited xattr sizes while CEPH was being deployed.

Note down the device major and minor numbers of the partitions you've created in the above steps.
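`ls -l /dev/sda8` shows the two numbers in place of the file size. The same information can be read programmatically; this is a minimal sketch using Python's `os.stat`, `os.major` and `os.minor`, with the device names again assumed.

```python
import os
import stat


def device_numbers(path: str) -> tuple[int, int]:
    """Return the (major, minor) numbers of a block device node."""
    st = os.stat(path)
    if not stat.S_ISBLK(st.st_mode):
        raise ValueError(f"{path} is not a block device")
    # st_rdev encodes both numbers; os.major/os.minor split it apart.
    return os.major(st.st_rdev), os.minor(st.st_rdev)


if __name__ == "__main__":
    for dev in ["/dev/sda8", "/dev/sda9", "/dev/sda10"]:  # assumed names
        try:
            major, minor = device_numbers(dev)
            print(f"{dev}: {major}:{minor}")
        except (OSError, ValueError) as err:
            print(f"{dev}: {err}")
```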

Make each of the partitions you've created available to one of the cephosd0, cephosd1 and cephosd2 containers. Suppose the above operation resulted in the '/dev/sda8', '/dev/sda9' and '/dev/sda10' devices: assign each device's major and minor numbers to a corresponding cephosd container. To do that, you'll need to edit the LXC configuration file of each cephosd* container by performing these steps –

- Log in to each cephosd node and run

- Stop your cephosd containers (cephosd0, cephosd1, cephosd2) –

- On the host, create a corresponding 'fstab' file for each container. You'd assign /dev/sda8 to cephosd0, /dev/sda9 to cephosd1 and so on. Depending on how many disks or partitions you intend to share, the fstab file would have lines like this –

Example –
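The example lines didn't survive in this copy. A sketch that generates one line per container, assuming the partition-to-container mapping described above and the mount point mnt/xfsdisk (the /mnt/xfsdisk path checked later in the pre-flight section, written without its leading slash as the note below explains):

```python
# Assumed partition-to-container mapping, as described in the text.
ASSIGNMENTS = {"cephosd0": "/dev/sda8", "cephosd1": "/dev/sda9", "cephosd2": "/dev/sda10"}


def fstab_line(device: str, target: str = "mnt/xfsdisk") -> str:
    """One fstab(5)-style line: device, target, fs type, options, dump, pass.

    The target carries no leading slash; LXC resolves a relative target
    against the container's root filesystem.
    """
    if target.startswith("/"):
        raise ValueError("drop the leading '/' from the mount target")
    return f"{device} {target} xfs defaults 0 0"


if __name__ == "__main__":
    for name, device in ASSIGNMENTS.items():
        # /var/lib/lxc/<name>/ is LXC's default location for root-owned containers.
        print(f"/var/lib/lxc/{name}/fstab -> {fstab_line(device)}")
```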

IMPORTANT NOTE

The mount point has no preceding forward slash. The slash is not required, since LXC treats a relative mount target as relative to the container's root filesystem. Refer to this post on askubuntu.com for more details.

- For each cephosd node, edit the configuration file

Edit the container configuration file 'config'. The lines below give the container permission to access the device inside LXC and mount it at the mount point defined in the fstab file. Add the following lines –
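The lines themselves are missing here. A sketch of what they typically look like with LXC 2.0.x, where `lxc.cgroup.devices.allow` whitelists the block device and `lxc.mount` points at the per-container fstab (LXC 2.1 and later renamed `lxc.mount` to `lxc.mount.fstab`). The 8:N device numbers are an assumption based on the usual numbering of /dev/sdaN; use the numbers you noted down.

```python
# Assumed device numbers: first-disk partitions /dev/sdaN are usually 8:N,
# but confirm with `ls -l /dev/sdaN` before using them.
OSD_DEVICES = {"cephosd0": (8, 8), "cephosd1": (8, 9), "cephosd2": (8, 10)}


def lxc_config_lines(name: str, major: int, minor: int) -> list[str]:
    """LXC 2.0.x config lines that hand one block device to a container.

    'b MAJ:MIN rwm' lets the container read, write and mknod that block
    device; lxc.mount names the per-container fstab file.
    """
    return [
        f"lxc.cgroup.devices.allow = b {major}:{minor} rwm",
        f"lxc.mount = /var/lib/lxc/{name}/fstab",
    ]


if __name__ == "__main__":
    for name, (major, minor) in OSD_DEVICES.items():
        print(f"# append to /var/lib/lxc/{name}/config")
        print("\n".join(lxc_config_lines(name, major, minor)))
```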

Now, start or restart the containers. This completes the creation of the containers, which are now ready for the CEPH installation.

CEPH Installation

Complete the pre-flight steps from the CEPH quick install guide here. The pre-flight steps –

- Set up the 'cephadmin' node with the ceph-deploy package.

- Install 'ntp' on all the nodes (required where OSD or MON daemons run).

- Create a common ceph deploy user (with password-less SSH and sudo access) that will be used for the CEPH installation on all nodes.
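A sketch of the deploy-user step, following the commands of the CEPH pre-flight guide (`useradd`, a sudoers drop-in with `NOPASSWD:ALL`, then `ssh-keygen` and `ssh-copy-id` from the admin node). The user name `cephdeploy` is hypothetical; the guide advises against `ceph`, which is reserved for the daemons since Infernalis.

```python
import shlex

NODES = ["cephadmin", "cephmon", "cephosd0", "cephosd1", "cephosd2", "cephradosgw"]
# Hypothetical user name. Don't use "ceph": it is reserved for the CEPH daemons.
DEPLOY_USER = "cephdeploy"


def user_setup_cmds(user: str) -> list[str]:
    """Shell lines to run on every node: create the user, grant password-less sudo."""
    sudoers = f"/etc/sudoers.d/{user}"
    rule = f"{user} ALL = (root) NOPASSWD:ALL"
    return [
        f"sudo useradd -d /home/{user} -m {user}",
        f"sudo passwd {user}",
        f"echo {shlex.quote(rule)} | sudo tee {sudoers}",
        f"sudo chmod 0440 {sudoers}",
    ]


def key_distribution_cmds(user: str, nodes: list[str]) -> list[str]:
    """Lines to run on cephadmin as the deploy user: one key pair, copied to the other nodes."""
    return ["ssh-keygen"] + [f"ssh-copy-id {user}@{node}" for node in nodes if node != "cephadmin"]


if __name__ == "__main__":
    print("\n".join(user_setup_cmds(DEPLOY_USER)))
    print("\n".join(key_distribution_cmds(DEPLOY_USER, NODES)))
```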

After the pre-flight steps are complete, check –

- That password-less access works from 'cephadmin' to all the other nodes in your cluster via the ceph deploy user you have created.

- The XFS partition created earlier is available on all OSDs under /mnt/xfsdisk.
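Both checks can be scripted from cephadmin. This is a minimal sketch: `BatchMode=yes` makes ssh fail instead of prompting for a password, `sudo -n` does the same for sudo, and `findmnt -n -o FSTYPE` prints the filesystem type of a mount point. The function names are illustrative.

```python
import subprocess


def ssh_argv(user: str, host: str, remote_cmd: str) -> list[str]:
    # BatchMode=yes turns a password prompt into a non-zero exit status.
    return ["ssh", "-o", "BatchMode=yes", f"{user}@{host}", remote_cmd]


def run_remote(user: str, host: str, remote_cmd: str) -> tuple[bool, str]:
    """Run one command over ssh; return (succeeded, stripped stdout)."""
    proc = subprocess.run(ssh_argv(user, host, remote_cmd),
                          capture_output=True, text=True, timeout=30)
    return proc.returncode == 0, proc.stdout.strip()


def verify_node(user: str, host: str, is_osd: bool) -> list[str]:
    """Return the problems found on one node; an empty list means both checks passed."""
    problems = []
    ok, _ = run_remote(user, host, "sudo -n true")  # -n: never prompt for a password
    if not ok:
        problems.append(f"{host}: password-less ssh/sudo failed")
    if is_osd:
        ok, fstype = run_remote(user, host, "findmnt -n -o FSTYPE /mnt/xfsdisk")
        if not ok or fstype != "xfs":
            problems.append(f"{host}: /mnt/xfsdisk is not an XFS mount")
    return problems
```

For example, `verify_node("cephdeploy", "cephosd0", is_osd=True)` returns an empty list when both checks pass (`cephdeploy` being a hypothetical user name).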

Next, complete the CEPH deployment. The steps, with illustrations, are available here; read the description at that link to get a good understanding of each command. Our experiment has 3 OSDs and 3 monitor daemons. Run these commands from the 'cephadmin' node as the ceph deploy user created in the pre-flight steps –

- Create a directory and run the commands below from within it.

- Designate the following nodes as CEPH monitors

- Install CEPH on all nodes, including the admin node.

- Add the initial monitors and gather their keys


- Prepare the disk on each OSD

- Activate each OSD

- Run this command to ensure cephadmin can perform administrative activities on all the nodes in your CEPH cluster

- Check the health of the cluster. If you've performed all the steps correctly, you should see HEALTH_OK.

- Install and deploy an instance of the RADOS gateway
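The individual commands are missing from this copy. The sketch below follows the Jewel-era quick start with ceph-deploy 1.5.x, where `osd prepare` and `osd activate` accept `HOST:DIR` targets such as the /mnt/xfsdisk mounts (ceph-deploy 2.0 dropped that form). It assumes a single monitor on cephmon; list more hostnames in `MON_NODES` if you run more monitors. Run the printed lines from the cluster directory created in the first step.

```python
import shlex

MON_NODES = ["cephmon"]  # assumption: add more hostnames here to run more monitors
OSD_NODES = ["cephosd0", "cephosd1", "cephosd2"]
ALL_NODES = ["cephadmin", *MON_NODES, *OSD_NODES, "cephradosgw"]
OSD_DIR = "/mnt/xfsdisk"  # XFS mount point inside each OSD container


def deploy_steps() -> list[list[str]]:
    """ceph-deploy 1.5.x-style commands for the deployment steps listed above."""
    osd_targets = [f"{node}:{OSD_DIR}" for node in OSD_NODES]
    return [
        ["ceph-deploy", "new", *MON_NODES],              # write the initial ceph.conf
        ["ceph-deploy", "install", *ALL_NODES],          # install CEPH packages everywhere
        ["ceph-deploy", "mon", "create-initial"],        # start monitors, gather keys
        ["ceph-deploy", "osd", "prepare", *osd_targets],
        ["ceph-deploy", "osd", "activate", *osd_targets],
        ["ceph-deploy", "admin", *ALL_NODES],            # push ceph.conf and admin keyring
        ["ceph", "health"],                              # expect HEALTH_OK
        ["ceph-deploy", "rgw", "create", "cephradosgw"],
    ]


if __name__ == "__main__":
    for argv in deploy_steps():
        print(shlex.join(argv))
```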

NOTE

If you haven't configured the OSD daemons to start automatically, they won't start after an LXC startup or restart, and running 'ceph -s', 'ceph -w' or 'ceph health' on the admin node shows HEALTH_ERR and a degraded cluster, since the OSDs are down. In that case, log in to each OSD node and start the OSD instance manually with the command sudo systemctl start ceph-osd@<id>, where <id> is 0, 1 or 2 in our case.
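A sketch that prints one such command per OSD, assuming OSD N runs on container cephosdN as in this setup. The same helper can emit `systemctl enable ceph-osd@<id>`, the standard systemd way to have the unit start at boot.

```python
import shlex


def osd_unit_cmd(osd_id: int, host: str, action: str = "start") -> str:
    """ssh line that runs `systemctl <action> ceph-osd@<id>` on the OSD's host."""
    if action not in ("start", "enable", "status"):
        raise ValueError(f"unsupported action: {action}")
    unit = f"ceph-osd@{osd_id}"
    return f"ssh {shlex.quote(host)} sudo systemctl {action} {shlex.quote(unit)}"


if __name__ == "__main__":
    # Assumes OSD N runs on container cephosdN, as in this setup.
    for osd_id in range(3):
        print(osd_unit_cmd(osd_id, f"cephosd{osd_id}"))
```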

After the above steps, the cluster should be up, with all the PGs showing 'active+clean' status.
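To check this from a script rather than by eye, you can parse `ceph -s -f json`. This sketch assumes the Jewel-era JSON layout, in which `pgmap` holds `num_pgs` and a `pgs_by_state` list of `state_name`/`count` entries; verify that against your own cluster's output before relying on it.

```python
import json


def parse_status(text: str) -> dict:
    """Parse the output of `ceph -s -f json`."""
    return json.loads(text)


def all_pgs_active_clean(status: dict) -> bool:
    """True when every PG counted in the status is in the active+clean state.

    Assumes status["pgmap"] has "num_pgs" and a "pgs_by_state" list of
    {"state_name": ..., "count": ...} entries (Jewel-era layout).
    """
    pgmap = status["pgmap"]
    clean = sum(entry["count"] for entry in pgmap["pgs_by_state"]
                if entry["state_name"] == "active+clean")
    return pgmap["num_pgs"] > 0 and clean == pgmap["num_pgs"]
```

On cephadmin, the input could come from `subprocess.run(["ceph", "-s", "-f", "json"], capture_output=True, text=True).stdout`.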

Access data on cluster

To run these commands, you'll need a subset ceph.conf with the monitor node information, plus the admin keyring file. For test purposes, you could run them on the cephadmin node, inside the cluster directory you created in the first step of the CEPH Installation section.

To list all available data pools

To create a pool

To insert data from a file into a pool

To list all objects in a pool

To get a data object from the pool
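The commands for these tasks are missing here. A sketch using the `rados` CLI with its `lspools`, `mkpool`, `put`, `ls` and `get` sub-commands, pointing it at the local ceph.conf and the admin keyring that ceph-deploy gathered into the cluster directory. `rados mkpool` is Jewel-era; on newer clusters `ceph osd pool create <pool> <pg_num>` is the usual way to create a pool. Pool, object and file names are hypothetical.

```python
import shlex

CONF = "ceph.conf"                     # subset config with the monitor information
KEYRING = "ceph.client.admin.keyring"  # admin keyring gathered by ceph-deploy


def rados(*args: str) -> list[str]:
    """Prefix a rados sub-command with the config file and keyring to use."""
    return ["rados", "-c", CONF, "--keyring", KEYRING, *args]


def pool_examples(pool: str, obj: str, infile: str, outfile: str) -> list[list[str]]:
    """One command per task listed above, in the same order."""
    return [
        rados("lspools"),                        # list all pools
        rados("mkpool", pool),                   # create a pool (Jewel-era rados)
        rados("-p", pool, "put", obj, infile),   # store a file's contents as an object
        rados("-p", pool, "ls"),                 # list the objects in the pool
        rados("-p", pool, "get", obj, outfile),  # fetch the object into a local file
    ]


if __name__ == "__main__":
    # Hypothetical pool, object and file names.
    for argv in pool_examples("testpool", "hello", "hello.txt", "hello.out"):
        print(shlex.join(argv))
```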


A software development kit (SDK) provides a set of files to build applications for a target platform, and defines the actual location of those files on the target platform or an intermediate platform that supports the target platform.

The macOS platform provides SDKs for the following target platforms that RAD Studio supports:

- macOS

- 64-bit iOS Device

- 32-bit iOS Device

- iOS Simulator

When you develop either Delphi or C++ applications for one or more of these target platforms, you must use the SDK Manager to add an SDK to RAD Studio for each target platform.

To add a new macOS or iOS SDK to your development system from a Mac:

- Select Tools > Options > Deployment > SDK Manager.

- Click the Add button.

- On the Add a New SDK dialog box, select a platform from the Select a platform drop-down list.

- The items in the Select a profile to connect drop-down list are filtered by the selected platform.

- Select a connection profile from the Select a profile to connect drop-down list, or select Add New to open the Create a Connection Profile wizard and create a new connection profile for the selected platform.

- The Select an SDK version drop-down list displays the SDK versions available on the target machine that is specified in the chosen connection profile.

- Select an SDK from the Select an SDK version drop-down list. For details and troubleshooting, see SDK Manager.

- Note:

- RAD Studio does not support versions of the iOS SDK lower than 8.0.

- iOS applications built with a given SDK version may only run on that version or later versions of iOS. For example, an application built with version 9 of the iOS SDK might crash on a device running iOS 8.

- Check Make the selected SDK active if you want the new SDK to be the default SDK for the target platform.

- Click OK to save the new SDK.

The files from the remote machine are pulled into the development system, so you can keep a local file cache of the selected SDK version. The local file cache can be used to build your applications for the SDK target platform.

After you create an SDK, you may change the local directory of your development system where RAD Studio stores its files.

If you do not create an SDK in advance, you can add one the first time you deploy an application to the remote machine. The Add a New SDK window appears where you can select an SDK version.